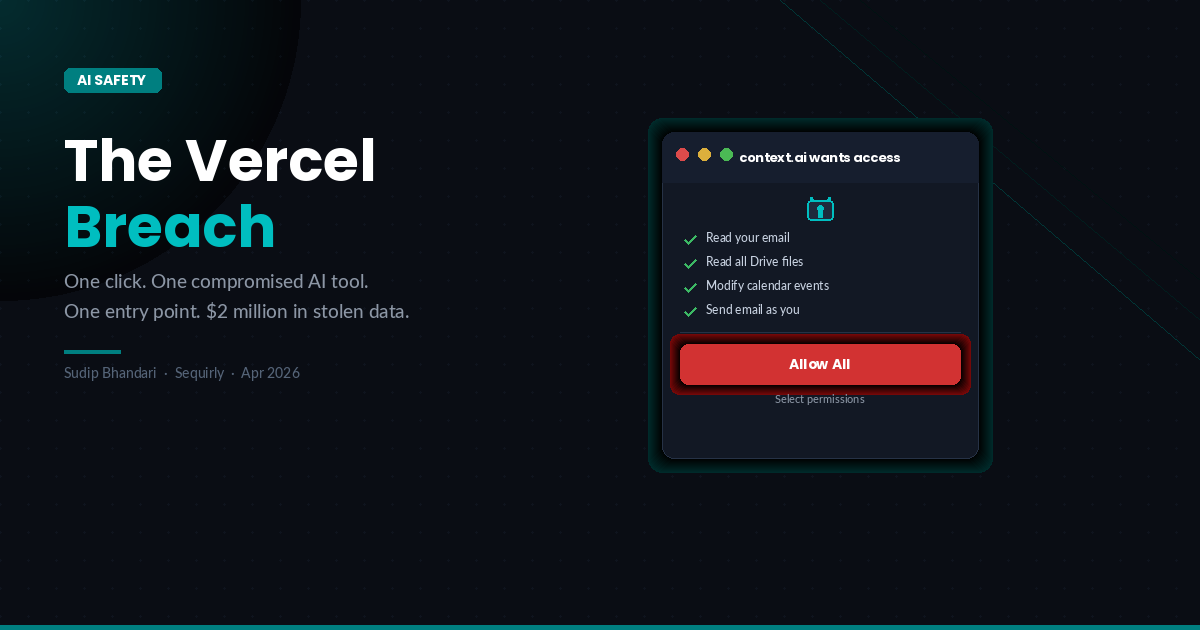

On April 20, Vercel published a security incident bulletin. The short version: a Vercel employee had installed an AI tool called Context.ai using their corporate Google account. When they signed up, they clicked "Allow All" on the OAuth permissions screen, granting the tool unrestricted access to their Google Workspace.

Context.ai had been compromised months earlier.

- In February, one of their employees had downloaded a Roblox auto-farm script that came packaged with Lumma Stealer, an infostealer that quietly lifted credentials from that machine.

- By April, attackers were using those stolen credentials to pivot from Context.ai into the Vercel employee's Google Workspace, then from there into internal Vercel systems.

- They got to environment variables, enumerated them, and are now reportedly selling the data under the ShinyHunters name for $2 million.

Vercel has engaged Mandiant, notified law enforcement, and contacted affected customers. They confirmed that sensitive, encrypted environment variables were not accessed.

That is some relief, though probably cold comfort for the teams whose non-sensitive variables are now in a data dump.

I want to talk about the part of this story that most post-mortems will skip.

The click nobody thought twice about

Put yourself in the Vercel employee's position. You hear about a useful AI tool. You go to sign up. The Google OAuth screen appears. There's a "Select permissions" option and an "Allow All" button. You click Allow All and move on with your day.

That's it. That's the whole attack surface.

Honestly, I've done versions of this. Not with corporate access to sensitive systems, but the behavior - clicking past OAuth screens because you want to get to the tool - is so common it barely registers as a decision. It just feels like the login step.

This is what makes the Vercel breach harder to talk about than a phishing attack or a misconfigured server.

- There's no clear moment of negligence.

- No one downloaded a suspicious attachment.

A person at a well-funded, security-conscious company made an ordinary decision to try a new AI tool, and that decision became the entry point for a significant breach.

AI tools are not designed to make you cautious

The "Allow All" button is there because every AI tool in this category is competing for activation.

Friction during sign-up means fewer conversions. Longer permission dialogs mean more drop-off. The tools are designed to get you through onboarding as fast as possible, and caution is the enemy of speed.

This same logic runs through the entire category.

- ChatGPT accepts whatever you paste without a single warning.

- Google's AI products connect to your entire Drive if you let them.

- Free-tier AI tools often train on your conversations by default, quietly, without surfacing that fact during the sign-up flow.

None of this is accidental. These are product decisions.

The result is that people who are not security engineers, and who have no particular reason to think carefully about what they're granting, are constantly making decisions with real security implications. And they're making them in contexts designed to minimize the thinking involved.

Why "employee education" doesn't fix this

The standard response to incidents like this is some version of: we need better security training. And yes, baseline awareness helps. But there's a ceiling on what training can do when every tool in someone's workflow is actively working to remove their caution.

You cannot train people to be consistently careful in an environment optimized for the opposite.

If the OAuth screen is designed to get you to click through quickly, and everyone around you uses AI tools the same way, and nothing bad has happened yet, then deliberate caution requires sustained effort against the current of normal behavior.

That works for some people some of the time. It does not work reliably across a whole team.

The Vercel employee was not a junior hire who didn't know better. They were working at a company whose entire business is developer infrastructure. The problem isn't knowledge. It's that the tools make low-friction decisions the default, and humans follow defaults.

What your team should actually do

A few practical steps that don't require buying anything.

Start with an audit.

Before you can manage your team's AI tool usage, you need to know what people are actually using day-to-day, not just what has been officially approved.

A simple survey, framed as "help us understand your workflow" rather than "confess your tools," usually surfaces things that never went through any approval process.

Set a rule about work accounts.

The Vercel breach required two things: a compromised third-party tool, and a work account connected to it.

The second one was controllable. A simple policy - "use personal email for personal AI tool trials; only connect work accounts to tools that have gone through a basic vetting process" - would have broken this particular attack chain.

It won't stop everything, but it raises the bar significantly.

Tighten your OAuth habits.

For tools that genuinely need Google Workspace access, "Allow All" is almost never necessary.

Most tools need read access to specific things, not write access to everything. It is worth spending two minutes on the permissions dialog instead of clicking through.
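As a rough illustration of what those two minutes look like, here is a minimal sketch that splits a tool's requested OAuth scopes into broad ones and everything else. The scope URLs are real Google scopes, but which ones count as "broad" is my own assumption for this example, and `review_scopes` is a hypothetical helper, not part of any Google library.

```python
# Scopes treated as "broad" here are an illustrative assumption, not an official list.
BROAD_SCOPES = {
    "https://www.googleapis.com/auth/drive",  # read/write access to all Drive files
    "https://mail.google.com/",               # full Gmail access
}


def review_scopes(requested):
    """Split requested OAuth scopes into (broad, other), preserving order."""
    broad = [s for s in requested if s in BROAD_SCOPES]
    other = [s for s in requested if s not in BROAD_SCOPES]
    return broad, other


# Hypothetical request from a tool that only needs to read a calendar.
requested = [
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/calendar.readonly",
]

broad, other = review_scopes(requested)
for scope in broad:
    print("needs review before granting:", scope)
```

A tool that only reads your calendar has no obvious reason to ask for full Drive access, so a mismatch like this one is the thing to question before clicking through.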

For developers: audit your environment variable sensitivity flags.

Vercel noted that sensitive variables, the ones explicitly flagged, were encrypted and not accessed.

If you have variables you'd be uncomfortable seeing in a data dump, make sure they're flagged correctly.
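One way to run that check is to look for variable names that sound like secrets but are not flagged. The sketch below assumes you have exported your variables into a simple list of name/flag pairs; that input shape, the name patterns, and the `unflagged_secrets` helper are all hypothetical and not Vercel's API or export format.

```python
import re

# Hypothetical name patterns that often indicate a secret; tune for your own projects.
SECRET_NAME = re.compile(
    r"(SECRET|TOKEN|PASSWORD|PRIVATE_KEY|API_KEY|DATABASE_URL)", re.IGNORECASE
)


def unflagged_secrets(variables):
    """Return names that look secret-like but are not marked sensitive.

    `variables` is an assumed export format: a list of dicts with
    "name" (str) and "sensitive" (bool) keys.
    """
    return [
        v["name"]
        for v in variables
        if SECRET_NAME.search(v["name"]) and not v.get("sensitive", False)
    ]


example = [
    {"name": "STRIPE_SECRET_KEY", "sensitive": True},
    {"name": "DATABASE_URL", "sensitive": False},
    {"name": "NEXT_PUBLIC_SITE_NAME", "sensitive": False},
]

print(unflagged_secrets(example))  # prints ['DATABASE_URL']
```

Name matching only produces candidates for a human to look at. A secret stored under an innocuous name will slip past it, so treat the result as a starting list rather than a complete audit.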

Create a channel for tool requests.

A lot of shadow AI adoption happens because people don't have an easy way to ask "can I use this tool?"

Approvals that take weeks train people to skip the process. If you can turn around a quick vetting in a day or two, most people will use it.

Where tools like Sequirly fit

I want to be clear about what Sequirly does and what it doesn't do.

Sequirly is a browser extension. It catches sensitive data before it's pasted into AI tools, in the browser, before anything leaves. It covers API keys, credentials, PII, financial data. It would catch the moment a teammate pastes a client's database connection string into ChatGPT.

It would not have stopped the Vercel breach. The entry point there was an OAuth permission grant, not a paste event.
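For the paste side specifically, the general idea can be sketched as pattern matching over text before it leaves the machine. This is not Sequirly's implementation, which I'm not describing here; the patterns and the `find_sensitive` helper are my own illustrative assumptions, and a real tool would need far broader coverage.

```python
import re

# Illustrative patterns only; real detectors cover many more formats.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "db_connection_string": re.compile(
        r"\b(?:postgres(?:ql)?|mysql|mongodb(?:\+srv)?)://[^\s:/@]+:[^\s@]+@[^\s/]+"
    ),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_sensitive(text):
    """Return the sorted names of every pattern that matches the given text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


pasted = "Why does postgres://app:hunter2@db.example.com:5432/prod time out?"
print(find_sensitive(pasted))  # prints ['db_connection_string']
```

The check runs entirely on the text itself, which is why this kind of detection can happen locally without sending the prompt anywhere.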

What I'd say is this: the Vercel breach and the things Sequirly catches are expressions of the same underlying pattern. Employees make quick, low-friction decisions about AI tools, and those decisions carry real security implications.

The vector differs. The cause doesn't.

If you want to know what to look for in a tool that addresses the paste side of this: you want something that

- works locally in the browser (not a proxy that routes all your traffic),

- shows metadata to admins without logging actual prompt content (the surveillance trade-off matters for team trust), and

- works without IT configuration so people can actually deploy it.

Sequirly fits those criteria. There are others worth evaluating too. The point is that a layer of tooling in this category is worth having, because training alone has a ceiling.

The Vercel breach will be written up as a supply chain attack, which it is. Context.ai got compromised. Vercel got hit through that connection. The attack chain is real and worth understanding.

But the entry point was an employee making a normal decision.

Until the tools themselves are designed to share some of the caution burden with users, those normal decisions will keep opening doors.

The teams that come out ahead won't be the ones with the most training. They'll be the ones who've made the cautious path as easy as the fast one.
