Five incidents. All involving well-known names. All with IT departments, legal teams, and in several cases explicit policies about AI tools.

All exposed data through ChatGPT or tools built around it.

When I read the Samsung story in 2023, I thought about how many times someone on our own team had probably done the same thing. The gap between "we have a policy" and "nothing has leaked" is wider than most teams realize.

Here's the full breakdown.

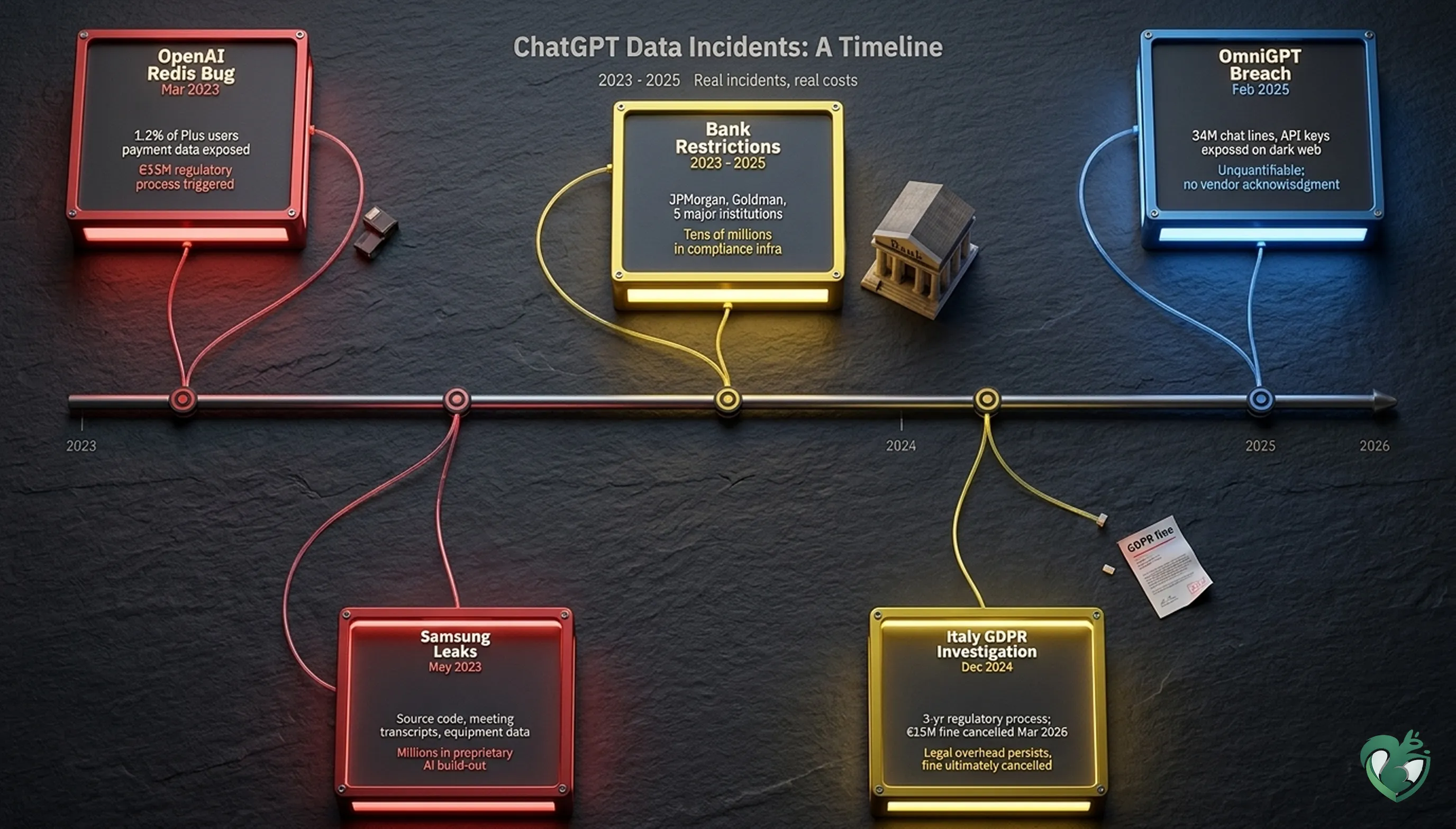

| # | Incident | Year | What was exposed | Estimated cost |
|---|---|---|---|---|
| 1 | Samsung engineers | 2023 | Source code, meeting transcripts, equipment data | Millions (internal AI build) |
| 2 | OpenAI Redis bug | 2023 | Conversation titles; payment data for 1.2% of Plus users | Triggered €15M regulatory process |
| 3 | Italy GDPR investigation | 2023–2026 | Training data practices | €15M fine (cancelled March 2026) |
| 4 | OmniGPT breach | 2025 | 34M chat lines, API keys, business documents | Unquantifiable |
| 5 | Bank restrictions | 2023 | Financial models, client data, trading strategies | Tens of millions (compliance infrastructure) |

1. Samsung: three leaks in three weeks (March–April 2023)

Samsung had banned ChatGPT internally. Then they lifted the ban.

Within three weeks, three separate engineers in separate teams had each created their own incident:

- The first pasted proprietary semiconductor source code into ChatGPT asking for optimization help.

- The second uploaded internal equipment data.

- The third submitted a transcript from a confidential internal meeting.

All three worked independently. None knew about the others.

What it cost

Under ChatGPT's default settings at the time, submitted content could be used to improve the model.

Samsung's proprietary source code was potentially ingested into an externally accessible system. Samsung responded by banning external AI tools again and funding the development of Samsung Gauss, a proprietary internal AI system.

Building that kind of infrastructure costs millions. The original ban cost nothing.

The lesson

Samsung lifted the ban because engineers needed the tool. Banning it again without a safe alternative would only have restarted the same cycle, which is why the second ban came with an internal replacement.

2. OpenAI's own bug exposed user payment data (March 2023)

On March 20, 2023, a bug in an open-source Redis client library caused some ChatGPT users to see other users' conversation titles in their sidebar.

The more significant issue: 1.2% of ChatGPT Plus subscribers had payment information exposed.

Exposed data included:

- Full name

- Email address

- Billing address

- Payment card type

- Last four digits of card number

OpenAI took ChatGPT offline for several hours. Not all affected accounts could be confirmed.

What it cost

Italy's data protection authority used this incident to temporarily ban ChatGPT and open a GDPR investigation. That investigation ran for over a year.

It ended with a €15 million fine against OpenAI in December 2024. A Rome court later cancelled that fine in March 2026, though the court has not yet published its reasoning.

A bug that lasted hours triggered a regulatory process that ran for three years. Whether or not the fine ultimately sticks, the legal overhead has already been paid.

The lesson

Every conversation your team sends to ChatGPT carries platform-level risk. OpenAI shipped a bug that exposed user payment data. Any platform can.

3. Italy's three-year GDPR investigation into OpenAI (2023–2026)

Italy's Garante opened its investigation in 2023, following the Redis bug. In December 2024, it issued a €15 million fine. The core finding: ChatGPT had processed EU users' personal data without a valid legal basis. That covered how the platform collected conversation data for model training without adequate consent mechanisms for European users.

A Rome court cancelled that fine in March 2026. The court has not yet published its reasoning.

What it cost

The fine itself is gone. The three years of regulatory overhead are not: legal responses, compliance changes, and Italian market restrictions all had real costs.

OpenAI has since established its European headquarters in Ireland. The Irish Data Protection Commission is now the lead supervisory authority for OpenAI's EU operations. Active investigations by Spain's AEPD and France's CNIL remain open and unresolved.

The lesson

GDPR liability doesn't require a breach. It only requires that personal data was processed without a valid legal basis, such as proper consent. Whether fines stick or not, the regulatory scrutiny doesn't stop once it starts.

4. OmniGPT: 34 million chat lines on the dark web (February 2025)

OmniGPT was an AI aggregator: one interface to access ChatGPT, Claude, Gemini, and other models. In February 2025, a hacker posted 34 million lines of OmniGPT user conversations to a dark web marketplace.

According to security researcher reports, the exposed data included:

- Proprietary business documents

- Personal identification records

- Medical records

- API keys for AWS, Google Cloud, and other services

Over 30,000 user accounts were affected. OmniGPT never issued a public acknowledgment.

What it cost

Every piece of data in the dump has to be treated as permanently exposed.

API keys had to be treated as compromised and rotated immediately. For businesses that uploaded client documents or internal strategy, the exposure has no ceiling.
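
One way to keep credentials out of prompts in the first place is a pre-send check. The following is a minimal sketch, not a complete detector: the function name `redact_secrets` and the two patterns are illustrative assumptions based on the commonly seen shapes of AWS access key IDs and Google API keys. Real secret scanners cover far more formats.

```python
import re

# Illustrative patterns only (assumptions, not an exhaustive list):
# AWS access key IDs commonly start with "AKIA" followed by 16 uppercase
# letters or digits; Google API keys commonly start with "AIza" followed
# by 35 URL-safe characters.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "google_api_key": re.compile(r"AIza[0-9A-Za-z_\-]{35}"),
}


def redact_secrets(text: str) -> tuple[str, list[str]]:
    """Return the text with matches replaced, plus the names of the patterns that matched."""
    found = []
    for name, pattern in SECRET_PATTERNS.items():
        # subn returns the rewritten text and the number of replacements made.
        text, count = pattern.subn(f"[REDACTED:{name}]", text)
        if count:
            found.append(name)
    return text, found


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is the placeholder key used in AWS documentation.
    prompt = "Why does this fail? key=AKIAIOSFODNN7EXAMPLE region=us-east-1"
    clean, hits = redact_secrets(prompt)
    print(clean)
    print(hits)
```

A check like this does not replace rotation after a breach. It only reduces the chance that a live key ends up in someone else's database.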

Most affected users found out through security researchers. Their first warning came after the data was already circulating.

The lesson

Every third-party AI tool is a platform you're trusting with your data.

When your team accesses ChatGPT through an aggregator or wrapper app, they add another point of failure. OmniGPT's silence after the breach shows what happens when that trust breaks. You have almost no recourse.
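
A simple guard against unreviewed wrappers is an allowlist of approved tools. The sketch below assumes a hypothetical set named `APPROVED_AI_HOSTS` and a helper `is_approved_ai_tool`. The hosts listed are placeholders for whatever your organization has actually reviewed, not a recommendation.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: these hosts are placeholders for the tools
# your organization has actually reviewed and approved.
APPROVED_AI_HOSTS = {"chatgpt.com", "claude.ai"}


def is_approved_ai_tool(url: str) -> bool:
    """True if the URL's host is an approved host or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == h or host.endswith("." + h) for h in APPROVED_AI_HOSTS)


if __name__ == "__main__":
    print(is_approved_ai_tool("https://chatgpt.com/c/123"))
    print(is_approved_ai_tool("https://some-aggregator.example/chat"))
```

Matching on the exact host or a dot-prefixed suffix means a lookalike such as `chatgpt.com.evil.example` is not treated as approved.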

5. Major banks restricted ChatGPT after employees shared client data (2023)

In early 2023, multiple major financial institutions restricted employee access to ChatGPT after internal compliance reviews surfaced a pattern of sensitive usage. Reported institutions included, among others:

- JPMorgan Chase

- Goldman Sachs

- Bank of America

- Wells Fargo

- Deutsche Bank

- Citigroup

Staff were using ChatGPT to draft communications and summarize documents. The data going in included financial models, client data, trading strategies, and in some cases material non-public information.

Standard work. Wrong tool.

What it cost

Each institution has since invested in building or licensing compliant internal AI infrastructure.

JPMorgan filed a trademark application for IndexGPT, an AI-driven investment advisory tool, in 2023. Deutsche Bank and Goldman both disclosed AI infrastructure spend in the tens of millions in subsequent earnings reports.

The lesson

JPMorgan, Goldman, and Deutsche Bank had compliance teams, legal departments, and seven-figure security budgets.

Policies alone still didn't stop the behavior. Frictionless tools get used. The question is whether you've made the safe path as easy as the risky one.

What these five incidents have in common

Look at what triggered each one:

- Samsung: engineers optimizing code

- OpenAI bug: a library update in platform infrastructure

- Italy fine: how the platform was designed to handle data

- OmniGPT: a third-party platform breach

- Banks: analysts summarizing client documents

No sophisticated exploits. No targeting. Ordinary work, done with a tool designed to remove all friction.

Training and policy help. They just don't work at 6pm on a deadline, when the tool is one tab over and the ask feels harmless.

Where to start

Share one of these incidents with your team this week. Use it as context, not a warning: this is what actually happens to real companies.

The practical steps for preventing it are in our 7-step guide to preventing AI data leaks: privacy settings, approved tool lists, and the data categories your team should never put into any AI tool.

For the technical layer that catches what policy can't, our guide to AI DLP explains why browser-level detection works when everything else falls short.

And if you want to know how exposed your team's current AI usage is, try Sequirly. Two minutes to install. It starts showing you what's moving through your team's AI tools immediately.