Every AI policy template you find online was written for a legal team.

The 30-page frameworks with steering committees and risk registers were built for organizations with a dedicated compliance department.

For a small business, you need one page, five sections, and a clear owner.

If you're among the 77% of companies that haven't thought about AI governance and you want to build a policy, this guide is for you.

Why Most AI Governance Policies Fail

If your policy is a PDF that says "use AI responsibly" and went out in a single email, it has already failed.

It now lives in a shared folder nobody opens again.

AI governance usually fails in one of two ways.

The Ban

Samsung banned all generative AI tools company-wide after three engineers leaked source code, meeting transcripts, and internal chip data to ChatGPT within 20 days.

But a ban without approved alternatives pushes usage underground.

That's why shadow AI has been in the news so much in recent years. Shadow AI breaches also cost an average of $670,000 more than standard incidents.

The Policy Nobody Follows

You have a document that says "don't share confidential information."

But nobody defines what that means, and nobody enforces it.

The document gives you legal cover but doesn't change what your team types on a Tuesday afternoon.

A policy that works does something different. It makes the right behavior specific enough that someone under deadline pressure knows in three seconds whether what they're about to paste is allowed.

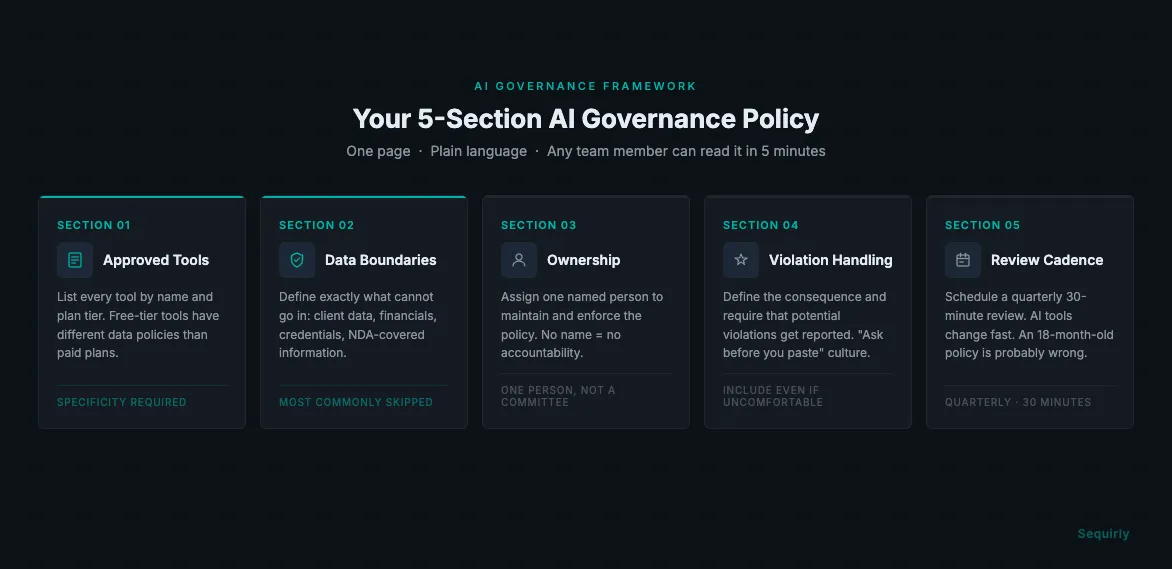

The 5 Sections Every AI Governance Policy Needs

As I mentioned earlier, you don't need 30 pages.

You need five clear sections any team member can read in five minutes and understand.

1. Approved Tools

Keep it simple. List the tools your team can use for work, and be specific.

Not "AI tools are permitted."

Instead, name the tool and the plan: "ChatGPT Team plan or higher (not the free tier), Claude Pro, and Gemini Workspace."

The specificity matters. Free tiers of ChatGPT, Claude, and Gemini can use your conversations for model training, depending on the vendor's current terms and your account settings, and paid business plans carry data protections the free versions don't.

If someone on your team is using a free AI account for client work, that client's data can end up in a training dataset you have no control over.
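
If you keep the approved list somewhere machine-readable, a check like the one below makes the "which plan?" question unambiguous. It's a minimal sketch: `APPROVED_TOOLS` and `is_approved` are hypothetical names, the plan labels mirror the example wording above, and reading "Team plan or higher" as Team plus Enterprise is an assumption.

```python
# Hypothetical allow-list mirroring the example policy wording.
# Tool and plan names are illustrative; replace them with your own list.
APPROVED_TOOLS = {
    "chatgpt": {"team", "enterprise"},  # assumption: "or higher" means Enterprise
    "claude": {"pro"},
    "gemini": {"workspace"},
}


def is_approved(tool: str, plan: str) -> bool:
    """Return True only if both the tool and the specific plan are approved."""
    allowed_plans = APPROVED_TOOLS.get(tool.strip().lower(), set())
    return plan.strip().lower() in allowed_plans
```

The design choice worth copying is that the plan is part of the key: a tool name alone never passes.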

2. What Data Cannot Go In

This is the most critical section and the most commonly skipped.

Don't write "don't share confidential information."

Write the specific categories. For most teams, that means:

- Client names, contact data, contracts, and deliverables

- Financial data: revenue figures, pricing, projections, payroll

- Source code and credentials (API keys, passwords, environment variables)

- Internal strategy, unreleased products, acquisition or partnership discussions

- Any information covered by a client NDA or confidentiality agreement

People aren't leaking data maliciously. They're trying to finish work.

The policy needs to be concrete enough that someone under deadline pressure knows immediately whether what they're about to paste is allowed.

A useful rule: if you'd hesitate to share it in a public forum, it doesn't go into an AI tool.
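
A couple of these categories can be partially caught by pattern matching before text reaches an AI tool. Here's a rough sketch under stated assumptions: `PATTERNS` and `flag_categories` are hypothetical names, and the two regexes are illustrative heuristics for credentials and contact data only. Categories like internal strategy or NDA-covered material need human judgment, not a regex.

```python
import re

# Illustrative heuristics only. They will miss real secrets and flag harmless text.
PATTERNS = {
    # Assignments such as API_KEY=..., DB_PASSWORD=..., "token: ..."
    "credential": re.compile(
        r"(?:api[_-]?key|password|passwd|secret|token)\w*\s*[:=]\s*\S+",
        re.IGNORECASE,
    ),
    # Anything shaped like an email address
    "contact data": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
}


def flag_categories(text: str) -> list[str]:
    """Return the names of prohibited categories the text appears to contain."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]
```

Expect false positives (a line like `tokenizer = x` trips the credential pattern) and misses, so treat a hit as a cue to stop and think, not as a gate.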

3. Who Owns AI Governance

For a team under 50, you don't need a committee.

You need one person who's accountable.

That's usually whoever is closest to both operations and technology: an ops lead, engineering lead, or COO.

Their job is narrow: decide what's on the approved tool list, field questions when team members aren't sure, and run the quarterly review.

Without a name attached to it, nobody enforces the policy and nobody updates it when AI tools change.

4. What Happens When the Policy Is Violated

This section gets avoided because it feels confrontational.

Include it anyway.

You don't need elaborate disciplinary language. You need two things: the consequence (usually a direct conversation, occasionally something more serious depending on what was shared) and an expectation that potential violations get reported.

The second part matters more than it seems. Samsung's engineers likely didn't know they were violating a policy because no clear policy existed at the time.

A culture where "when in doubt, ask before you paste" is the norm catches more problems than one that only addresses accountability after something goes wrong.

5. Review Cadence

AI tools change fast. OpenAI keeps adding features that interact with your data in new ways. A policy that's 18 months old is probably wrong about something.

Set a quarterly review on the calendar now.

Thirty minutes to check whether the approved tool list is still accurate and whether the data rules need updating. Don't wait for a problem to trigger it.
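
If the policy owner wants a reminder that doesn't depend on memory, the cadence is easy to compute. A minimal sketch, assuming a quarter is approximated as 91 days; `REVIEW_INTERVAL`, `next_review`, and `is_overdue` are hypothetical names.

```python
from datetime import date, timedelta

# Assumption: "quarterly" is approximated as 91 days.
REVIEW_INTERVAL = timedelta(days=91)


def next_review(last_reviewed: date) -> date:
    """Date by which the next policy review should happen."""
    return last_reviewed + REVIEW_INTERVAL


def is_overdue(last_reviewed: date, today: date) -> bool:
    """True if the review window has passed without a review."""
    return today > next_review(last_reviewed)
```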

What Compliance Actually Asks of Small Teams

The EU AI Act, GDPR, and the growing patchwork of US state AI laws look overwhelming on first read.

For most small teams, the practical baseline is simpler.

Three requirements follow from almost every current framework.

Data minimization. Don't put data into an AI tool unless you need it processed. Under GDPR and similar frameworks, personal data should only be processed where there's a clear purpose, and that includes what goes into your AI prompts.

Documentation. If your team uses AI to help make decisions about people (hiring, performance reviews, content moderation), document that use. Know where AI is involved in your decision-making so you can explain it if asked.

Vendor assessment. Your AI vendor is a data processor. Know its data handling policies: whether it processes data in your jurisdiction, and whether enterprise plans change what it does with your inputs. This is exactly what the approved tool list in your policy formalizes.

For most teams under 50, full EU AI Act obligations don't apply directly today.

What applies is the general accountability principle: know what your team is doing with data, and be able to show you're handling it responsibly.

For the intersection of governance and technical data loss prevention, AI Data Loss Prevention covers the control layer that sits alongside policy.

Why Technical Controls Matter Alongside Policy

You can't monitor every prompt.

You shouldn't try.

What you can do is make the right behavior easy to follow. The approved tool list should be somewhere your team actually looks, not buried in an onboarding doc from six months ago.

Policy tells people what not to do. Technical controls catch the cases where memory fails.

Sequirly sits in the browser and intercepts sensitive data before it's submitted to an AI tool, stopping it before it leaves rather than logging what was sent after the fact. The admin dashboard shows metadata only: which tool, which data category, what action was taken. Not the content of the prompt.
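
To make "metadata only" concrete, here's what such a log record could look like. This is an illustrative sketch, not Sequirly's actual schema or code: the `InterceptEvent` class, the `build_event` function, the field names, and the example values are all assumptions. The point is that the record has no field for the prompt text at all.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class InterceptEvent:
    """Hypothetical audit record: describes what happened, never what was typed."""
    tool: str            # which AI tool, e.g. "chatgpt"
    data_category: str   # which category was detected, e.g. "credential"
    action: str          # what was done, e.g. "blocked"
    timestamp: str       # when, as an ISO 8601 UTC string


def build_event(tool: str, data_category: str, action: str) -> dict:
    """Create a log entry from metadata only; prompt content is never passed in."""
    now = datetime.now(timezone.utc).isoformat()
    return asdict(InterceptEvent(tool, data_category, action, now))
```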

If you want to give it a try, Sequirly is free to start.

If you're not sure what your team is currently sending to AI tools, the AI Security Scanner gives you a baseline before you write the policy. You can't govern what you haven't measured.

For a broader view of what a full security stack looks like for teams under 50, AI Security for Teams covers the controls that sit alongside governance.

Getting the Team to Actually Follow It

Don't send a PDF and ask people to sign it.

Walk through it in 15 minutes at a team meeting.

Explain why each data category is on the list. Give a real example (a client brief, a financial model, a piece of source code) and walk through what "don't paste this" looks like in practice.

If your team pushes back, it's usually the friction they're resisting, not the safety goal.

A policy that explains _why_ gets more genuine compliance than one that only says _what_. People who understand the reason apply judgment in the edge cases. People who just saw a list won't.

Where to Start with AI Governance Today

If you have no policy: start with section 2. Defining the prohibited data categories in a shared doc your team can find is the highest-leverage first step. It takes an hour and covers the most immediate risk.

If you have a policy nobody reads: simplify it to one page and five sections. Replace the compliance language with something you'd write in a Slack message to your team.

If you want a template to start from, the AI Policy Generator builds a policy tailored to your team's tools and risk profile in about five minutes. It's free, requires no IT involvement, and produces something you can use the same day.